Acoustic Matching by Embedding Impulse Responses

ICASSP 2020 (to appear), May 2020

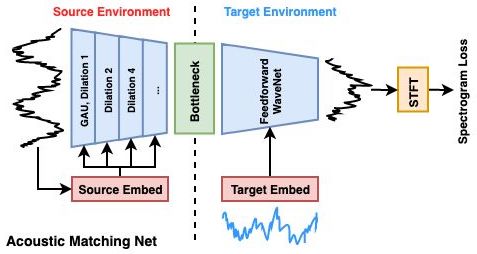

The acoustic matching network takes an input waveform recorded in a source environment, together with a reference waveform (in blue) recorded in a target environment, and maps the input to a waveform that sounds as if it had been recorded in the target environment.

Abstract

The goal of acoustic matching is to transform an audio recording made in one acoustic environment to sound as if it had been recorded in a different environment, based on reference audio from the target environment. This paper introduces a deep learning solution for two parts of the acoustic matching problem. First, we characterize acoustic environments by mapping audio into a low-dimensional embedding invariant to speech content and speaker identity. Next, a waveform-to-waveform neural network conditioned on this embedding learns to transform an input waveform to match the acoustic qualities encoded in the target embedding. Listening tests on both simulated and real environments show that the proposed approach improves on state-of-the-art baseline methods.
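As background for the "simulated environments" mentioned above: a common way to simulate a recording in a room is to convolve a dry (anechoic) signal with that room's impulse response, assuming the room behaves as a linear time-invariant system. The minimal sketch below illustrates only that signal model. The helper names `synthetic_rir` and `apply_environment` and the exponentially decaying noise impulse response are hypothetical illustrations, not the paper's network, embedding, or data pipeline.

```python
import numpy as np


def synthetic_rir(rt60, sr=16000, length_s=1.0, seed=0):
    """Hypothetical toy room impulse response (RIR): white noise under an
    exponential envelope whose level falls by 60 dB after `rt60` seconds."""
    rng = np.random.default_rng(seed)
    n = int(length_s * sr)
    t = np.arange(n) / sr
    # 10 ** (-3 * t / rt60) equals 1e-3 (i.e. -60 dB) at t == rt60.
    envelope = 10.0 ** (-3.0 * t / rt60)
    rir = rng.standard_normal(n) * envelope
    rir[0] = 1.0  # crude stand-in for the direct-path arrival
    return rir / np.max(np.abs(rir))  # peak-normalize to 1.0


def apply_environment(dry, rir):
    """Simulate recording `dry` in the room described by `rir`, assuming a
    linear time-invariant room: full linear convolution."""
    return np.convolve(dry, rir)


sr = 16000
dry = np.random.default_rng(1).standard_normal(sr)  # 1 s noise stand-in for dry speech
source = apply_environment(dry, synthetic_rir(rt60=0.3, sr=sr, seed=2))  # drier room
target = apply_environment(dry, synthetic_rir(rt60=0.9, sr=sr, seed=3))  # more reverberant room
# An ideal acoustic-matching system would map `source` to (approximately) `target`,
# while observing the target room only through some other reference recording.
print(len(source), len(target))  # full convolution: 16000 + 16000 - 1 samples each
```

In the acoustic matching task the target impulse response is never given directly; the target room is known only through reference audio, which is why the approach above learns an embedding of the environment from audio rather than taking the impulse response as input.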

Links

- Paper (268 KB PDF)

- Sound Clips

Citation

Jiaqi Su, Zeyu Jin, and Adam Finkelstein. "Acoustic Matching by Embedding Impulse Responses." ICASSP 2020 (to appear), May 2020.

BibTeX

@inproceedings{Su:2020:AMB,
  author = "Jiaqi Su and Zeyu Jin and Adam Finkelstein",
  title = "Acoustic Matching by Embedding Impulse Responses",
  booktitle = "ICASSP 2020 (to appear)",
  year = "2020",
  month = may
}