PairedCycleGAN: Asymmetric Style Transfer for Applying and Removing Makeup

CVPR 2018, June 2018

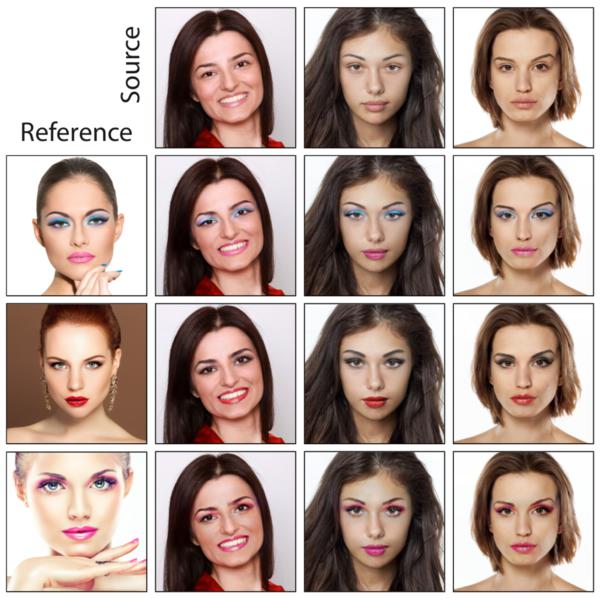

Three source photos (top row) are each modified to match makeup styles in three reference photos (left column) to produce nine different outputs (3 × 3 lower right).

Abstract

We introduce an automatic method for editing a portrait photo so that the subject appears to be wearing makeup in the style of another person in a reference photo. Our unsupervised learning approach relies on a new framework of cycle-consistent generative adversarial networks. Unlike the image domain transfer problem, our style transfer problem involves two asymmetric functions: a forward function encodes example-based style transfer, whereas a backward function removes the style. We construct two coupled networks to implement these functions – one that transfers makeup style and a second that removes makeup – such that applying them in succession to an input photo yields an output that matches the input. The learned style network can then quickly apply an arbitrary makeup style to an arbitrary photo. We demonstrate the effectiveness of the method on a broad range of portraits and styles.
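The asymmetry described in the abstract can be illustrated with a small sketch: the forward function takes two inputs (a source photo and a reference photo), the backward function takes one, and a cycle-consistency term measures how closely their successive application reproduces the source. Everything below is a hypothetical toy, not the paper's networks or loss: images are flat lists of floats, the L1 distance is an assumed choice of metric, and `toy_apply_makeup` / `toy_remove_makeup` are stand-ins that store the "face" in the fractional part of each value and the "makeup" in the integer part.

```python
import math
from typing import Callable, List

Image = List[float]  # toy stand-in for a photo: a flattened list of pixel values


def l1_distance(a: Image, b: Image) -> float:
    """Mean absolute difference between two equally sized images."""
    if len(a) != len(b):
        raise ValueError("images must have the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_loss(
    source: Image,
    reference: Image,
    apply_makeup: Callable[[Image, Image], Image],
    remove_makeup: Callable[[Image], Image],
) -> float:
    """Apply the reference style, remove it again, and compare with the source."""
    with_makeup = apply_makeup(source, reference)  # forward: two inputs
    recovered = remove_makeup(with_makeup)         # backward: one input
    return l1_distance(recovered, source)


# Hypothetical toy functions (assumptions for illustration only).
def toy_apply_makeup(source: Image, reference: Image) -> Image:
    """Keep the source's fractional 'face' part, take the reference's integer 'makeup' part."""
    return [(s - math.floor(s)) + math.floor(r) for s, r in zip(source, reference)]


def toy_remove_makeup(photo: Image) -> Image:
    """Drop the integer 'makeup' part, keeping only the fractional 'face' part."""
    return [p - math.floor(p) for p in photo]


source = [0.25, 0.5, 0.75]      # no makeup: integer parts are zero
reference = [2.125, 1.5, 3.25]  # carries makeup levels 2, 1, 3

consistent = cycle_loss(source, reference, toy_apply_makeup, toy_remove_makeup)
inconsistent = cycle_loss(source, reference, toy_apply_makeup, lambda photo: photo)
```

In this toy, a removal function that truly inverts the transfer drives the cycle term to zero, while a removal function that leaves the makeup in place does not. In a learned setting, a term of this kind would be one training signal among others.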

Downloads

- Paper (PDF, 5 MB)

Citation

Huiwen Chang, Jingwan Lu, Fisher Yu, and Adam Finkelstein. "PairedCycleGAN: Asymmetric Style Transfer for Applying and Removing Makeup." CVPR 2018, June 2018.

BibTeX

@inproceedings{Chang:2018:PAS,
   author = "Huiwen Chang and Jingwan Lu and Fisher Yu and Adam Finkelstein",
   title = "{PairedCycleGAN}: Asymmetric Style Transfer for Applying and Removing Makeup",
   booktitle = "CVPR 2018",
   year = "2018",
   month = jun
}