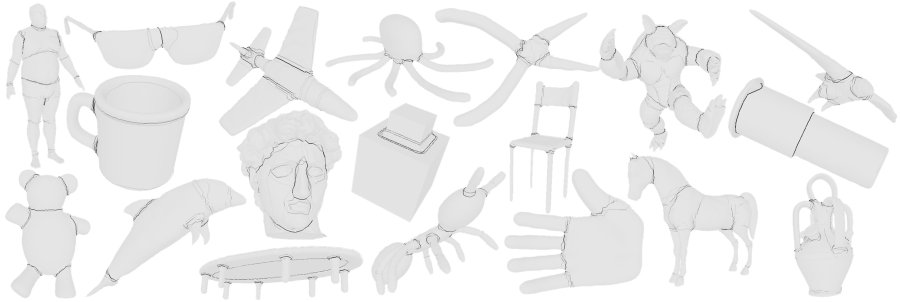

On the low end of the spectrum, we have been investigating shape distributions, which represent the shape of a 3D model as a probability distribution sampled from a shape function that measures geometric properties of the model. The motivation for this approach is that samples can be computed quickly and easily, and the shape of the resulting distribution is invariant to similarity transformations (with a normalization step for scale invariance), noise, tessellation, cracks, etc. For example, one such shape distribution, which we call D2, represents the global shape of an object by the probability distribution of Euclidean distances between pairs of randomly selected points on its surface. The figure below shows the D2 distributions for 5 tanks (gray curves) and 6 cars (black curves). Note how the two classes are distinguishable.
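
As a rough illustration of the D2 idea described above, the following Python sketch estimates the distribution for a triangle mesh. It is a minimal sketch under stated assumptions, not the implementation used for the figure: the function names, the NumPy mesh layout (`vertices` as an N×3 array, `faces` as an M×3 index array), the number of sample pairs, the bin count, and the choice of dividing distances by their mean for scale normalization are all illustrative assumptions.

```python
import numpy as np

def sample_surface_points(vertices, faces, n, rng):
    """Draw n points uniformly at random from the surface of a triangle mesh."""
    tri = vertices[faces]                      # (M, 3, 3) triangle corners
    a, b, c = tri[:, 0], tri[:, 1], tri[:, 2]
    # Pick triangles with probability proportional to their area.
    areas = 0.5 * np.linalg.norm(np.cross(b - a, c - a), axis=1)
    idx = rng.choice(len(faces), size=n, p=areas / areas.sum())
    # Uniform point inside each chosen triangle (square-root barycentric trick).
    r1 = np.sqrt(rng.random(n))[:, None]
    r2 = rng.random(n)[:, None]
    return (1 - r1) * a[idx] + r1 * (1 - r2) * b[idx] + r1 * r2 * c[idx]

def d2_distribution(vertices, faces, n_pairs=100_000, n_bins=64,
                    max_ratio=3.0, seed=0):
    """Histogram of distances between random surface point pairs.

    Assumption: distances are divided by their mean so that the result does
    not depend on the model's scale; other normalizations are possible.
    """
    rng = np.random.default_rng(seed)
    p = sample_surface_points(vertices, faces, n_pairs, rng)
    q = sample_surface_points(vertices, faces, n_pairs, rng)
    d = np.linalg.norm(p - q, axis=1)
    d = d / d.mean()                           # scale normalization (assumed)
    hist, edges = np.histogram(d, bins=n_bins, range=(0.0, max_ratio))
    return hist / hist.sum(), edges            # bins sum to 1
```

Two models could then be compared by, for instance, summing the absolute differences between their histograms; that particular dissimilarity measure is likewise an assumption made here for illustration.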